Nine years ago today, Steve Jobs passed away. I don’t know about you, but I still feel the void he left behind.

If you’ve been following me for a while, you already know I’ve always preferred the way Jobs led Apple over the way Cook has been leading the company since he became CEO. This is entirely a matter of personal preference, and I’m not asking you to agree with me or feel what I feel. This thinking-out-loud post you’re reading is just made of feelings and impressions, not of points to be ‘right’ or ‘wrong’ about.

Apple is undeniably in great health today. When Steve left us, I remember reading a lot of drivel about Apple being irremediably doomed now that its charismatic, visionary founder had died. I never believed that for a second. I knew Apple was in good hands, because the team of executives was made up of very capable people.

There’s no denying that Tim Cook has done a stellar job in keeping the huge Apple ship afloat. Whenever I discuss Cook’s Apple versus Jobs’s Apple, the common ground I find with people who disagree with me is that they’re both great Apples in different ways. And I genuinely believe that.

But… I don’t like Cook’s Apple.

Since I became an Apple user back in 1989, I’ve always felt there was more to it than just being a returning customer of a tech company. There was a sense of belonging to a common set of principles that went against the mainstream. That was extremely appealing for someone like me who has always swum against the current. There were the Mac user groups, places (whether physical or online) to share a passion with like-minded people. There was the idea of ‘thinking differently’ way before it was formalised in 1997 by Apple itself. Apple products weren’t just computers and peripherals, but specialised tools made for people with a creative, think-out-of-the-box mindset.

We Mac users were a minority, but we all had that tongue-in-cheek snobbism, like, We’re the cool guys, we’re the tech élite. But even in my years as an informal Apple evangelist, I never tricked anybody into leaving Windows and PCs behind and becoming Apple users because I was conditioned or because I was a thoughtless zealot who kept interfering with others’ work to the tune of “Get a Macintosh, you fool!” When someone asked me for technical advice, I first tried to help them out with whatever platform they were using. If I noticed that what they were trying to accomplish would have been better achieved by using a Macintosh, then I suggested it, explaining the different approach and the logic behind the Mac user interface, et cetera. If they decided to ‘switch’, then I would help them out in any way I could. Other fellow evangelists were less respectful. But I’m starting to digress.

When Apple was on the brink of bankruptcy in the mid-1990s, that sense of community grew even stronger. We Mac users were on this metaphorical Titanic, worried sick about the future of the company and our beloved machines, trying to stick together and help one another. The first emails I ever wrote were about giving people advice on which Mac software and hardware to get (and where), to make the Macs they were currently using as future-proof as possible in case Apple went away. Looking back, those were really thrilling times.

But then Apple acquired NeXT, shortly afterwards Steve Jobs was back, and soon after came the iMac, the iBook… even more thrilling times. And Jobs’s way of bringing Apple from near-bankruptcy to exceptional success, the way he commandeered the ship and the way he led it from that moment on, truly reinforced that sense of belonging, that feeling of participation — as an Apple user — in that amazing underdog tale.

Ever since Steve Jobs passed away, that something special about being an Apple user has quickly faded. The thrill is gone. Cook’s Apple is an all-business money machine I feel less and less attached to. When Apple was at that intersection of technology and liberal arts Steve talked about, I was there. I recognised myself. Jobs’s Apple wasn’t a company made solely of tech-heads and led by business people, but a place where incredible design and masterful engineering met, a place led by a man who was eclectic enough to understand both fields intimately and get the product formulas right so very often. He put his soul into the products he envisaged. He brought the element of fun. He was the only one who could get away with using the word ‘magic’ in his presentations — because he gave meaning to it. He was the first to be amazed by the final product when he introduced it on stage. I’ve said it countless times, but there was a genuineness in Steve’s presentations that betrayed his underlying passion. And that passion was contagious.

Whenever he went a little off-script to talk about Apple’s values and Apple’s way of doing things, you knew he was all-in, you knew it wasn’t just the empty corporate talk you often hear when company executives harp on about their company’s ‘mission’. Steve’s legacy is a bunch of executives with contrived, unconvincing smiles, parroting phrases from the Good Book of Apple at every keynote and event. They go through the motions, they present their homework, and that’s it. Today, whenever I see a keynote segment end, I’m always left with the feeling that the presenter will stop smiling the moment they’re off camera and breathe a sigh of relief that their part is over. The show is over.

Despite those who still bring up Antennagate whenever you discuss Jobs’s style of leadership, Jobs’s Apple had more respect for its customers and developers than today’s Apple.

I’ve already brought this up in a 2016 post, but since I like my readers to stay on the page instead of constantly following external links, I’ll republish the whole passage here, because it bears repeating. In the last part of Jobs’s keynote at Macworld Expo in San Francisco in 2000 (a milestone, since it’s when Mac OS X was first introduced), before the ‘one more thing’ segment, Jobs took a moment to talk about ‘The big picture’, and about what makes Apple, Apple:

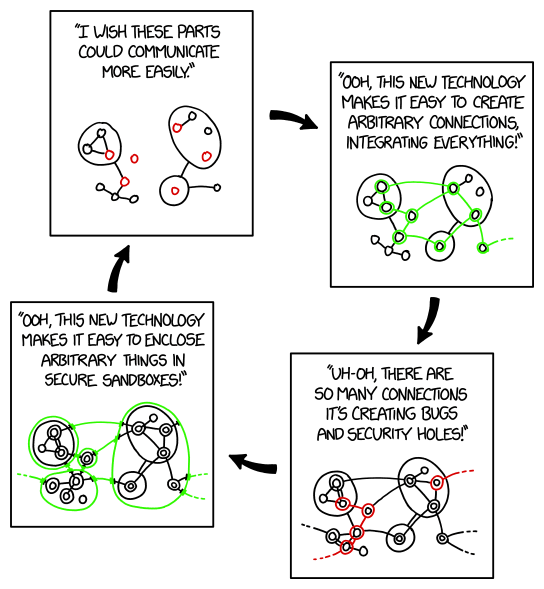

I want to zoom out and talk about the big picture of how we see all of these things play together. You know, I remember two and a half years ago when I got back to Apple, there were people throwing spears saying “Apple is the last vertically integrated personal computer manufacturer, it should be broken up into a hardware company, a software company, what have you,” and— it’s true, that Apple is the last company in our industry that makes the whole widget, but what that also means [is that] if managed properly, it’s the last company in our industry that can take responsibility for customer experience. There’s nobody left!

And it also means that we don’t have to get ten companies in a room to agree on everything to innovate. We can decide ourselves to place our bets like we did for USB on the original iMac; hardware — let’s build it in; software — let’s build it in; marketing — let’s go evangelise it to the developers and tell our customers why it’s better. And let’s not wait three years for an agreement — and now Apple is leading in USB. Desktop movies — let’s take our hardware and put FireWire ports in iMac, let’s write applications called iMovie that take advantage of QuickTime and allow us to do these things, and let’s go market it, so people can understand this and see how easy it is to use. There’s no other company left in this industry that can bring innovation to the marketplace like Apple can.

So, we really care deeply about the hardware, we think this is where everything starts, and we got again the finest hardware lineup in Apple’s history. We’re so proud of these products. But we also do software at Apple. Again, we own the second-highest-volume operating system in the world and one of only two high-volume operating systems in the world. We make a lot of other software: Mac OS X coming, iMovie, et cetera. And the greatest thing is when we put them together, and we integrate them, like the examples I just gave you, like iMovie and the new iMacs, seamlessly integrated into desktop movies. Another example is AirPort, where we could seamlessly integrate this whole new wireless networking technology into our OS, so when you plug in an AirPort card in your iBook you don’t have to spend half an hour flipping settings. It just — boom! — pops to life and works.

This is the kind of innovation we can bring through this integration. And now we’re adding Internet stuff. We got our first four iTools today, that wouldn’t be possible if we couldn’t take unfair advantage of the fact that we supplied OS 9, the client operating system, and so that our servers and our clients could work together in a more intimate way than anyone else can do. And so we’re gonna integrate these things together in ways that no one else in this industry can do, to provide a seamless user experience where the whole is greater than the sum of the parts. And we’re the last guys left in this industry that can do it. And that’s what we’re about.

These are words from someone who wants to build something that is better for everybody involved — and he fucking means it. And this is also the core of a certain culture which, unfortunately, has been crumbling at and around Apple since he passed away. A culture where customers feel respected and taken care of, where developers feel motivated, inspired, and incentivised to play their part in this big picture. Just read Jesper’s recent piece titled ‘Home’ to better understand what I’m talking about.

Jobs and his Apple cared about the products but also about the whole experience surrounding them. They had to be superior products not simply because they exuded a ‘premium feel’ and superficial prettiness, but because what drives them — the software, the user interface — is as essential to the whole experience as the hardware. Today, does Apple still “take responsibility for customer experience”? Does Apple still provide a “seamless user experience where the whole is greater than the sum of the parts”? I don’t feel that. The user experience is not seamless, and the whole is a sleek clash of all of its parts. If Apple cared about customer experience, they wouldn’t have taken four years to rectify the disaster that was the MacBook keyboard’s butterfly mechanism.

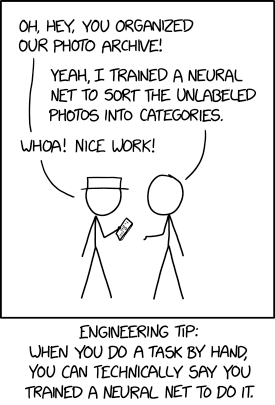

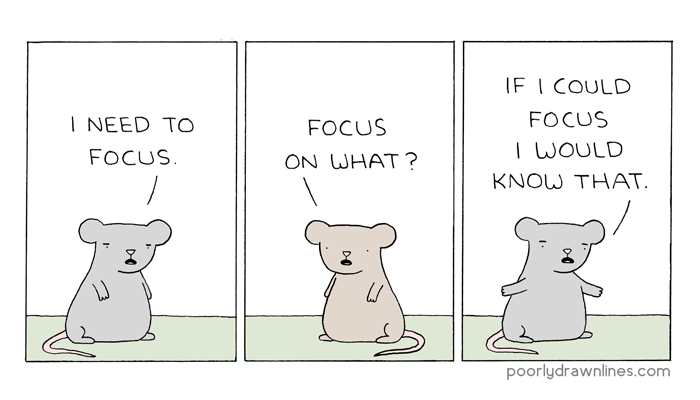

Today’s Apple is business first, engineering second, design third. It’s led by someone who has no charisma or vision. Hey, nothing wrong with that, but a smart move would be to keep those who possess both traits close instead of pushing them away (people like Jonathan Ive and Scott Forstall, for example). Today’s Apple is ‘team effort’. It’s a very well-maintained entity run by many capable maintainers who have perfected the art of iterating, at least as far as hardware is concerned. But where are the architects?

Software-wise, especially when it comes to Mac OS, I don’t see any. Those working in software design seem to have forgotten how to make a great operating system with a well-crafted, thoughtful user interface. I don’t feel a strong direction here; just repeated attempts and a visible trial-and-error approach. And I totally share John Gruber’s concern when he writes:

My biggest question and deepest concern regarding Apple’s leadership, especially now that Ive is gone and Phil Schiller has moved on to a fellowship with only the App Store and events on his plate, is whose taste is driving product development? We know the actors, we know the writers, we know the cinematographers, but who is directing? Who is saying “This isn’t good enough” — or in the words of Apple’s former director, “This is shit”? When a product decision comes down to this or that, who is making that call?

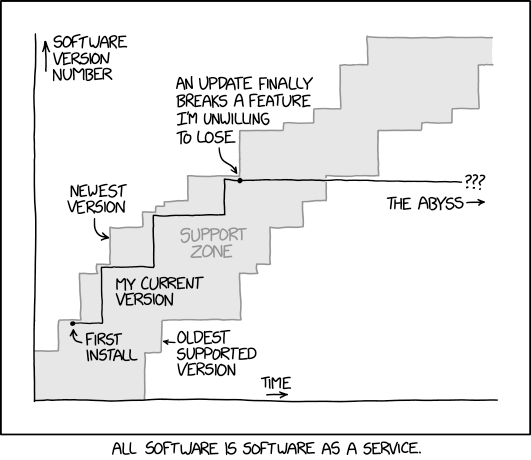

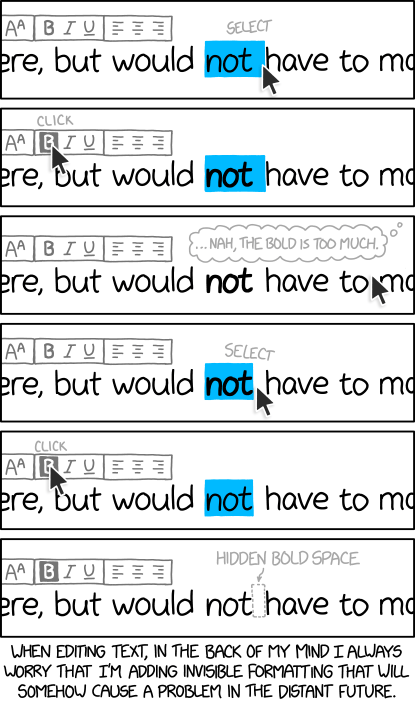

As I’ve repeatedly stated in my observations about Big Sur, whose betas I’ve been testing since August, the next version of Mac OS shines when it comes to performance, responsiveness, and stability — that’s my experience, at least — but when we examine the look and feel of its user interface, it mostly feels directionless. Where is the purpose? Why these changes? Is it to make the interface more usable? Is it to make that interaction work better or just to make that element look sleeker? It’s often hard to see the intention or even the logic behind some of them. The background colour of the System Preferences pane has subtly changed at least three times in the course of five betas. Things you used to do with one click now take two or more, just because someone at Apple felt like touching up a certain part of the interface for no apparent reason other than ‘trying something different’ or ‘fixing a previous, equally arbitrary cosmetic change’.

Today’s Apple is led by an expert in supply chain management. And that’s how Apple treats their developers — like cogs in the supply chain. And customers? The goal with customers seems to be to maximise the lock-in. From the Catalina issues mail folder with all the feedback I’ve received over the past months, I could pull out hundreds of stories of customers who are still using Apple products not because “There’s nothing better, man” or because “Apple gets me, so of course I’m using Mac” — responses I used to hear all the time back in the old days — but because “Eh, today Apple’s the lesser evil”, or “I’m too deep in Apple’s ecosystem to even begin thinking about alternatives”, or “If it weren’t for [app x] that’s my favourite tool for [task y], I would have switched to Windows already”.

Now, before the keyboard warriors start sending me hate mail, let me reiterate a point I made earlier. I recognise and acknowledge all the good products, the good initiatives, and the positive stances coming from Apple today. I still use plenty of Apple products, and I still trust Apple more than other tech companies, especially because of the importance Apple places on privacy. And…

(let me copy & paste this bit from the beginning of this piece)

…There’s no denying that Tim Cook has done a stellar job in keeping the huge Apple ship afloat. Whenever I discuss Cook’s Apple versus Jobs’s Apple, the common ground I find with people who disagree with me is that they’re both great Apples in different ways. And I genuinely believe that.

But… I don’t like Cook’s Apple.

The void Steve Jobs left behind nine years ago is not limited to Apple, but extends to the whole tech industry. Things today are okay — there is still the occasional product or innovation that enthuses me, of course. In certain respects I find Microsoft — Microsoft! — to be a more daring and interesting player. But without the colourful, rebellious spot that was Jobs’s Apple, the current technology landscape feels desaturated and mostly ‘corporate’, if you feel what I feel.

Technology alone is not enough, the man said. But in this sort of post-apocalyptic tech landscape, technology seems to be all that’s left.